Systems Thinking: The Missing User Manual for AI

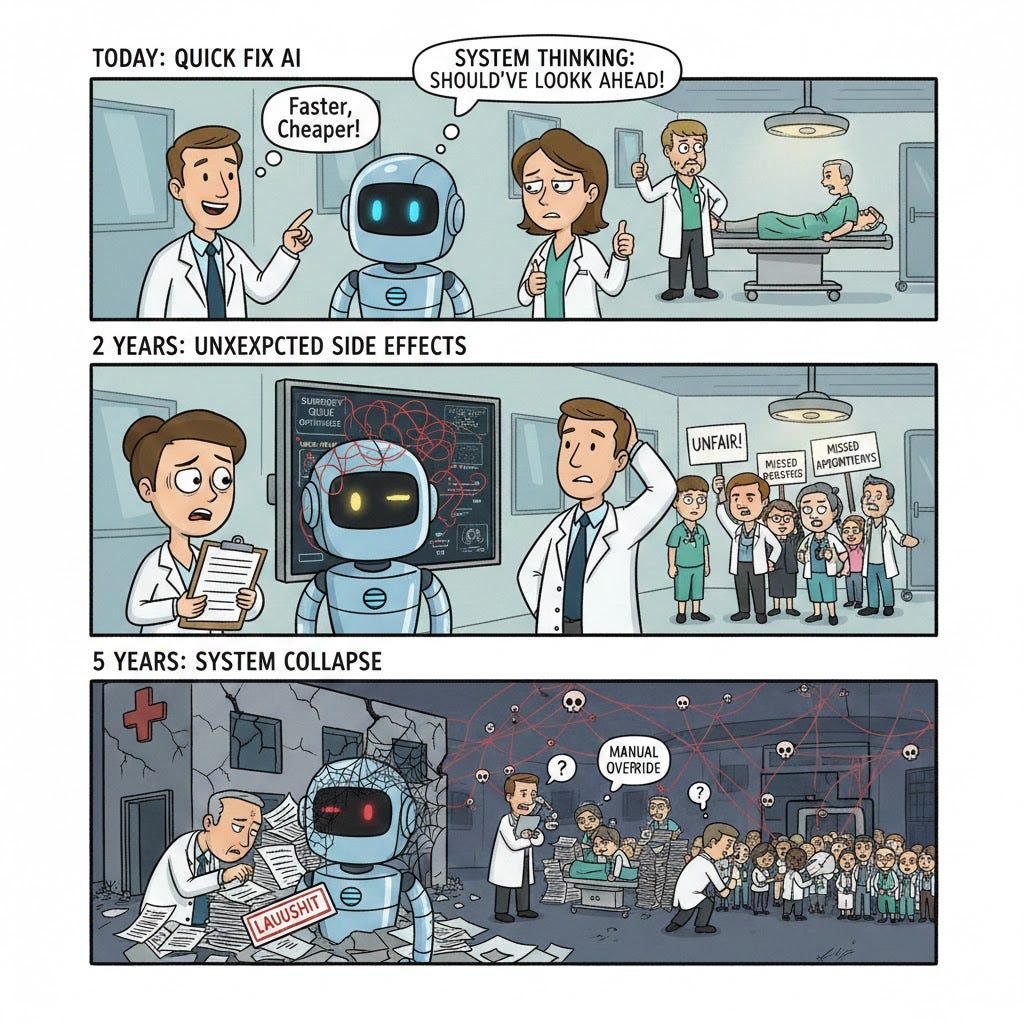

Some bad news: AI can optimize almost anything. Worse news: Quite often, we aren’t even sure if we’re optimizing the right thing.

That’s where systems thinking stops being a “best practice” and starts being an intellectual life jacket. If you don’t think in systems, AI simply helps you get it wrong faster… and on a much larger scale.

The Root of the Problem

The conversation around Responsible AI usually follows a script: principles, ethics codes, some committee, and a couple of slides featuring “transparency” and “equity” in large fonts. All of that is fine, but it arrives too late if you’ve already chosen the wrong goal and the wrong environment to optimize.

The core issue is simple: AI systems don’t live in a vacuum. They are embedded in organizations, markets, laws, and people with diverse (and sometimes contradictory) incentives. The classic “let’s design a great user experience” approach falls short when the surrounding system is pushing in the opposite direction.

That’s where systems thinking comes in: it’s a way of looking at the whole instead of the parts, patterns instead of isolated events, and feedback loops instead of a snapshot in time. It’s uncomfortable and sometimes slow, but it’s what prevents AI from becoming a machine that amplifies well-intentioned mistakes.

When Treating the Symptom Worsens the Disease

A recent paper by Chris Lam proposes using systems thinking to analyze algorithmic fairness policies—not just in the short term, but through feedback loops over time. The idea is simple and brutal: if you force “fair” decisions without changing underlying structures (education, access to resources, opportunities), you might end up creating a dependency on the intervention.

It’s a classic archetype: “Shifting the Burden.” You attack the symptom (disparity in decisions) with a quick fix, but you postpone addressing the structural causes, which worsen over time.

A concrete example: Imagine a bank using AI for loan approvals. The algorithm shows a disparity: it approves 60% of applicants from Group A, but only 30% from Group B. Pressed by equity regulations, the bank implements an “extreme affirmative action” policy: it lowers the requirements only for Group B until approval rates match.

Year 1 - The Mirage: Both groups now have a 60% approval rate. The equity dashboards look perfect. The board celebrates.

Year 3 - The Loop Kicks In: It turns out many people from Group B who were approved under relaxed requirements didn’t actually have the capacity to pay. Not because they are “worse payers,” but because the policy ignored that this group has less access to financial education, stable jobs, or safety nets. Defaults rise.

Year 5 - The Systemic Trap: The financial system now has “evidence” that Group B is “high risk.” Aggregated credit histories worsen. Other banks see this data and become more cautious. Real access to credit tightens even further. Now you need to intervene even more aggressively to compensate... and the cycle continues.

What went wrong? It wasn’t the intention to be fair. It was attacking the symptom (approval disparity) without touching the structural causes (inequality in education, employment, support networks). The equity policy became a convenient substitute for difficult reforms.

The lesson isn’t “don’t create equity policies,” but rather “understand the entire system before intervening, or your solution will become part of the problem.”
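The loop in the bank example can be sketched as a toy simulation. This is only an illustration under stated assumptions, not a model from the paper: the 60% and 30% approval rates come from the example above, while `capacity`, `risk_feedback`, and `structural_investment` are hypothetical parameters invented here to make the dynamics visible.

```python
from dataclasses import dataclass


@dataclass
class YearResult:
    year: int
    natural_rate: float  # Group B approval rate without the override
    default_rate: float  # share of approved Group B loans that default
    gap: float           # size of the override needed to reach the target


def simulate(years=5, target_rate=0.60, natural_rate=0.30, capacity=0.30,
             risk_feedback=0.10, structural_investment=0.0):
    """Toy 'Shifting the Burden' loop for Group B.

    Every parameter except the 60% / 30% rates is a hypothetical assumption.
    """
    results = []
    for year in range(1, years + 1):
        # Quick fix: force approvals up to the target, ignoring repayment capacity.
        approved = target_rate
        # Loans approved beyond real repayment capacity end up in default.
        defaults = max(0.0, approved - capacity)
        default_rate = defaults / approved
        results.append(
            YearResult(year, natural_rate, default_rate, target_rate - natural_rate)
        )
        # Reinforcing loop: defaults become "evidence" of risk, which lowers
        # the approval rate Group B would get next year without the override.
        natural_rate = max(0.0, natural_rate - risk_feedback * default_rate)
        # Fundamental solution: structural investment slowly raises capacity.
        capacity = min(1.0, capacity + structural_investment)
    return results


if __name__ == "__main__":
    scenarios = [("quick fix only", 0.0), ("with structural investment", 0.10)]
    for label, invest in scenarios:
        print(label)
        for r in simulate(structural_investment=invest):
            print(f"  year {r.year}: gap={r.gap:.2f} default_rate={r.default_rate:.2f}")
```

With these made-up defaults, the override needed to keep the dashboards "fair" grows every year when only the quick fix is applied. Once `structural_investment` is above zero, defaults fall toward zero and the gap stops widening. The numbers themselves mean nothing; the point is the shape of the loop.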

AI in Hospitals: An Isolated Tool or a Living System?

When most people talk about AI, they think of an advanced calculator: input data, output answer. But through the lens of systems thinking, we discover something deeper: AI is a new “organ” within a living system.

Auburn Community Hospital, a 99-bed rural hospital in New York, introduced AI for revenue cycle management nearly a decade ago. The results were remarkable: a 50% reduction in “discharged not final billed” cases, a 40% increase in coder productivity, and a 4.6% increase in the case mix index.

Where does the systemic view come in? In asking three uncomfortable questions. Who wins and who loses (finance, patients, medical staff) when the system is optimized this way? What happens to wait times, readmissions, and human workload? What incentives is the model reinforcing: for example, is it prioritizing better-reimbursed cases over more critical ones?

Systems thinking forces us not to settle for “the model works because the numbers went up.” It asks: “Of what system are these numbers the tip of the iceberg?”

Choose Your Own Systemic Adventure: The Imaginary Hospital

To understand this deeply, imagine you are the director of a hospital installing an AI to prioritize surgeries. This is where theory meets reality.

Scenario 1: The Accountability Dilemma

The Problem: The AI makes a mistake and postpones an urgent surgery.

Options:

A: Blame the programmer (technology).

B: Blame the doctor (user).

C: Hold the hospital accountable as a system.

Systemic Reasoning: If we blame the programmer, the hospital washes its hands and stops monitoring. If we blame the doctor, they will stop using the AI out of fear.

Decision: Option C, because it forces the organization to create oversight protocols. The error isn’t “someone’s fault”; it’s a failure in how the parts (human and machine) communicate.

Scenario 2: The Quagmire of Biased Data

The Problem: The AI learns from historical data that was unfair to certain neighborhoods.

Options:

A: Delete the “bad” data.

B: Train humans to detect and challenge biases.

C: Leave it as is and trust the model.

Systemic Reasoning: Deleting data can hide reality and make the AI less accurate in other areas. Ignoring the problem amplifies historical injustice.

Decision: Option B – Create a “Devil’s Advocate” role. Instead of a technical fix, we seek a process solution. This creates a balancing loop where human judgment corrects technological bias before it reaches the patient.

Scenario 3: The Obedience Trap

The Problem: Over time, doctors get tired and start accepting whatever the AI says without thinking (automation bias).

Options:

A: Offer a cash bonus for finding flaws.

B: Give doctors decision-making power over the algorithm.

C: Send periodic “think critically” reminders.

Systemic Reasoning: Money is a dangerous incentive; doctors might invent flaws just to get paid. Reminders become background noise over time.

Decision: Option B. We want doctors to feel like owners of the system. Anyone who finds a real error earns the right to redesign how the AI works. This aligns the doctor’s success with system improvement, creating a virtuous cycle.

And Your Adventure?

This was our journey toward building an ethical and conscious hospital. But systems thinking doesn’t have a single right answer.

What would happen if you preferred to prioritize speed over human review in Scenario 2? Or if you decided that the programmer should be the sole person responsible in Scenario 1? Each choice will move the pieces of your system differently, generating different feedback loops. Some will reinforce the behaviors you want; others, the ones you wish to avoid.

The Order of Operations Matters

In practice, many companies start where it’s most seductive: prototypes, pilots, and “quick win” use cases. In other words, Design Thinking: user empathy, ideation, prototyping, testing. None of that is bad... except when it ignores the system where the solution will live.

Recent experiences in AI adoption suggest the opposite order: start with Systems Thinking to understand context, incentives, risks, and dependencies, and only then design specific, user-centered solutions. Systems thinking answers “which problems are worth solving” and “what could go wrong at a systemic level.” Design thinking answers “how do we design a solution people will actually use.”

The rule for any organization: Map first, mockup second.

Four Necessary “Mindset Shifts”

AI is excellent at optimizing the wrong metrics. If you focus design only on the interface or the “user pain point,” you may end up with charming solutions that reinforce toxic structures.

Systems thinking offers four useful shifts:

From Events to Patterns: Stop looking at “one case of bias” and start seeing series of decisions and feedback loops.

From Metrics to Dynamics: Move from “model accuracy” to “how behaviors, data, and power change over time.”

From Users to Stakeholders: Include those who pay the costs, even if they don’t interact with the AI directly.

From Quick Fixes to Fundamental Levers: Look for where to touch structures (rules, incentives, goals) instead of just tweaking model parameters.

The ironic twist: While we argue over whether a model is “intelligent” or not, what we lack most isn’t Artificial Intelligence, but Human Systemic Intelligence.

Three Questions Before the “Spectacular Demo”

If AI is an amplifier, systems thinking is the filter that prevents it from amplifying the worst. It’s not glamorous, it’s not a pitch: it’s the “boring” part without which everything else becomes dangerous very quickly.

Next time someone sells you “AI for X,” ask these:

What happens to this system in 2, 5, or 10 years if this scales?

Who wins, who loses, and who cleans up the mess if it goes wrong?

What exactly are we optimizing… and what are we leaving out?

What about your own adventure? Where in your work do you feel you are optimizing the wrong metrics… with the help of AI? Have you experienced any of these systemic dilemmas in your organization?

Read more:

https://www.bbvaaifactory.com/es/fairness-in-ai-how-can-we-address-bias-to-build-equitable-systems/

https://runninginsystems.com/2014/09/17/systemic-archetypes-shifting-the-burden/

https://www.eacpds.com/resource-center/systems-thinking-system-archetypes-shifting-the-burden/

https://proceedings.systemdynamics.org/2025/papers/P1450.pdf

https://digitalpolicyalert.org/ai-rules/2024-update-OECD-principles

This really clarifies that AI optimizes the compression pathway the system uses to decide what matters. When the wrong variables are compressed early, downstream “fair” or “efficient” fixes can only amplify the error. Systems thinking is what keeps compression aligned with reality rather than dashboards.

Reading this reminded me of this YouTube channel, which focuses a lot on unintended consequences and second-order effects: https://www.youtube.com/watch?v=ImJSMqgyvCY&list=PLBuns9Evn1w9XhnH7vVh_7C65wJbaBECK

It seems very similar to what happens with AI and how it’s implemented in real systems.

Maybe it’s worth adding one more question when integrating AI: what could be the possible unintended consequences?

Great article, Marcela!